AI Regulation: It's All About Standards

I recently gave a talk to the Engineers' Association of my alma mater, Trinity College, Cambridge. This is what I said.

Video here: https://www.youtube.com/watch?v=2Wnf97_Zu5E

You may ask how and why I have been sucked into the world of AI. Well, eight years ago I set up a cross-party group on AI in the UK Parliament because I thought parliamentarians didn’t know enough about it. Then, on the principle that in the kingdom of the blind the one-eyed man is king, I was asked to chair the House of Lords Special Enquiry Select Committee on AI, with the remit “to consider the economic, ethical and social implications of advances in artificial intelligence”. This produced its report “AI in the UK: Ready, Willing and Able?” in April 2018. It took a close look at government policy towards AI and its ambitions in the very early days of its policy-making, when the UK was definitely ahead of the pack.

Since then I have been lucky enough to act as an adviser to the Council of Europe’s working party on AI (CAHAI) and to the OECD Network of Experts on AI (ONE AI), and to help establish the OECD Global Parliamentary Network on AI, which helps in tracking developments in AI and the policy responses to them, which come thick and fast.

Artificial intelligence presents opportunities in a whole variety of sectors. I am an enthusiast for the technology, and I recently wrote an upbeat piece on the way that AI is already transforming healthcare.

Many people find it unhelpful to have such a variety of different types of machine learning, algorithms, neural networks and deep learning all labelled AI. But the expression has been used since John McCarthy coined it in 1956, and I think we are stuck with it!

Nowadays barely a day goes by without some reference to AI in the news media, particularly to some aspect of large language models (LLMs). We saw the excitement over ChatGPT from OpenAI and AI text-to-image applications such as DALL-E. Now we have GPT-4 from OpenAI, Llama from Meta, Claude from Anthropic, Gemini from Google, Stable Diffusion from Stability AI, Copilot from Microsoft, Cohere and Midjourney: a whole ecosystem of LLMs and other generative AI models of various kinds.

Increasingly, the benefits are seen not just in terms of efficiency and speed in analysis, pattern detection and prediction. Now, with generative AI, they are much more about what AI can add creatively to human endeavour and how it can augment what we do.

But things can go wrong. This isn’t just any old technology. Its degree of autonomy, its very versatility, its ability to create convincing fakes, the lack of human intervention and the black-box nature of some systems make it different from other tech. The challenge is to ensure that AI is our servant, not our master, especially before the advent of artificial general intelligence (AGI).

Failure to tackle issues such as bias and discrimination, deepfakery and disinformation, and lack of transparency will lead to a lack of public and consumer trust, reputational damage and an inability to deploy new technology. Public trust and trustworthy AI are fundamental to continued advances in technology.

It is clear that AI, even in its narrow form, will and should have a profound impact on corporate governance. In particular, companies will need to ensure that they adopt AI responsibly and ethically. The AI Safety Summit at Bletchley Park, where incidentally my parents met, ended with a voluntary corporate pledge.

This means a more value-driven approach to the adoption of new technology needs to be taken. Engagement from boards, through governance, right through to policy implementation is crucial. This is not a matter that can simply be delegated to the CTO or CIO.

It means in particular assessing the ethics of AI adoption and the ethical standards to be applied corporately. It may involve the establishment of an ethics advisory committee. It certainly involves clear board accountability.

We have a pretty good common set of principles, those of the OECD or the G20, which are generally regarded as the gold standard. If adopted, they can help us ensure:

- Quality of training data

- Freedom from bias

- The impact on individual civil and human rights

- Accuracy and robustness

- Transparency and explainability, which of course include the need for open communication where these technologies are deployed.

And now we have the G7’s Guiding Principles for Organizations Developing Advanced AI Systems to back those up.

Generally, in business and in the tech research and development world, I think there is an appetite for adopting common standards which incorporate ethical principles, in areas such as:

- Risk management

- Impact assessment

- Testing

- AI audit

- Continuous monitoring

And I am optimistic that common international standards can be achieved in all these areas. The OECD is doing a great deal to scope the opportunity and enable convergence. Our own AI Standards Hub, led by the Alan Turing Institute, is heavily involved, as are NIST in the US and the EU’s standards bodies, CEN and CENELEC.

Agreement on the actual regulation of AI in terms of what elements of governance and application of standards should be mandatory or obligatory, however, is much more difficult.

In the UK there are already some elements of a legal framework in place. Even without specific legislation, AI deployment in the UK will interface with existing legislation and regulation, in particular relating to:

- Personal data under UK GDPR

- Discrimination and unfair treatment under the Human Rights Act and Equality Act

- Product safety and public safety legislation

- Various sector-specific regulatory regimes requiring oversight and control by persons undertaking regulated functions: for example, the FCA for financial services and, in future, Ofcom for social media.

But when it comes to legislation and regulation specific to AI, such as on transparency, explanation and liability, that is where some of the difficulties and disagreements start emerging. This is especially so given the UK’s approach in its recent White Paper and the government’s response to the consultation.

Rather than regulating in the face of clear current evidence of the risks posed by many uses and forms of AI, the government says it is too early to think about tackling the clear risks in front of us. More research is needed. We are expected to wait until we have complete understanding and experience of the risks involved. Effectively, in my view, we are being treated as guinea pigs to see what happens, whilst the government talks about the existential risks of AGI instead.

We shouldn’t focus only on existential long-term risk, or indeed on risk from frontier AI. Predictive AI is important too, in terms of automated decision-making, risk of bias and lack of transparency.

The government says it wishes its regulation to be innovation-friendly and context-specific. But, sticking to its piecemeal, context-specific approach, it is proposing neither immediate regulation nor any new powers for sector regulators.

But regulation is not necessarily the enemy of innovation. It can in fact be a stimulus to it, and the key to gaining and retaining public trust around digital technology and its adoption, so that we can realise the benefits and minimise the risks.

The recent response to the AI White Paper has demonstrated the gulf between the government’s rhetoric about being world-leading in safe AI and the reality of its policy.

In my view we need a broad definition of AI and early, risk-based, overarching horizontal legislation across the sectors. This would ensure conformity with standards for a proper risk management framework and impact assessment when AI systems are developed and adopted.

Depending on the extent of the risk and impact assessed, further regulatory requirements would arise. When the system is assessed as high risk there would be additional requirements to adopt standards of testing, transparency and independent audit.

What else is on my wish list? As regards its own use of AI and automated decision-making systems, the government needs to firmly embed its Algorithmic Transparency Recording Standard, alongside risk assessment, together with a public register of AI systems in use in government.

It also needs to beef up the Data Protection and Digital Information Bill in terms of the rights of data subjects in relation to automated decision-making, rather than water them down. It should also retain and extend Data Protection Impact Assessments and Data Protection Officers (DPOs) for use in AI regulation.

I also hope the government will take strong note of the House of Lords report on the use of copyrighted works by LLMs. The government has adopted its usual approach of relying on voluntary action. But it is clear that this is simply not going to work. It needs to act decisively to make sure that these works are not ingested for training LLMs without any return to rightsholders.

Luckily, others such as the EU, and even the US, are grasping the nettle, contrary to many forecasts. The EU’s AI Act is an attempt to grapple with here-and-now risks in a constructive way. Even in the US, the White House Executive Order and bipartisan Congressional proposals show a much more proactive approach.

But the more we diverge from other jurisdictions on regulation, the more difficult it gets for UK developers and for those who want to develop AI systems internationally.

International harmonization, interoperability or convergence, call it what you like, is in my view essential. Without it, developers will not be able to commercialize their products on a global basis, assured that they are adhering to common ethical standards of regulation. This means working with the BSI, ISO, the OECD and others towards convergence of international standards. There are already several existing standards which can form the basis for this, such as ISO/IEC 42001 and 42006 and NIST’s AI Risk Management Framework (RMF).

What I have suggested I believe would help provide the certainty, convergence and consistency we need to develop and invest in responsible AI innovation in the UK more readily. That in my view is the way to get real traction!

Great prospects but… the potential and challenges for AI in healthcare

I recently wrote a piece on AI in healthcare for the Journal of the Apothecaries Livery Company, which has among its membership a great many doctors and health specialists. This is what I said.

In our House of Lords AI Select Committee report “AI in the UK: Ready, Willing and Able?” back in 2018, we devoted a full chapter to the potential for AI in healthcare. This reflected the views of many of our witnesses about AI’s likely profound impact. Not long afterwards, the Royal College of Physicians itself made several far-sighted recommendations relating to the incentives, scrutiny and regulation needed for AI development and adoption.2

At that time it was already clear that medical imaging and supporting administrative roles were key areas for adoption. Fast forward five years to the current enquiry by the House of Commons Health and Social Care Select Committee, “Future cancer: exploring innovations in cancer diagnosis and treatment”. The application of different forms of AI is now very much here and now in the NHS.

It is evident that this is a highly versatile technology. The Committee heard in particular from GRAIL Bio UK about its Galleri AI application, which can detect a genetic signal shared by over 50 different types of incipient cancer, particularly more aggressive tumours.3 Over the past year we have heard of other major breakthroughs:

- Brainomix has tripled stroke recovery rates.4
- The conversational AI application Wysa provides mental health support.5
- Eye2Gene is a decision support system for the genetic diagnosis of inherited retinal disease.
- Applications have been developed for remotely managing conditions at home.6

Mendelian has developed an AI tool, now being piloted in the NHS, to interrogate large volumes of electronic patient records. It finds people with symptoms that could indicate a rare disease.6A

We have seen the introduction of Frontier software designed to ease bed blocking by improving the patient discharge process.7 And just a few weeks ago we heard how Lausanne researchers have used AI to create a digital bridge between the brain and electrodes implanted in the spine, allowing patients with spinal cord injuries to regain coordination and movement.8 It is also clear, despite the recent fate of Babylon Health,9 that consumer AI-enabled health apps and devices can have a strong future in health monitoring and self-care. We now have large language models such as Med-PaLM, developed by Google Research, which are designed to provide high-quality answers to medical questions.9A

We are seeing the promise of the use of AI in training surgeons for more precise keyhole brain surgery.

Now, it seems, foundation models for generalist medical artificial intelligence could be just around the corner. These are trained on massive, diverse datasets. They will be able to perform a very wide range of tasks using many kinds of data, such as images, electronic health records, laboratory results, genomics, graphs or medical text, and to communicate directly with patients.10

We even have the promise of smartphones being able to detect the onset of dementia.10A

Encouragingly, whatever one’s view of the current condition of the health service more broadly, successive Secretaries of State for Health have been aware of the potential and have responded by investing. Over the past few years, the Department of Health and the NHS have set up a number of mechanisms and structures designed to exploit and incentivize the development of AI technologies. The pandemic, whilst diminishing treatment capacity in many areas, has also demonstrated that the NHS is capable of innovation and agile adoption of new technology.

Its performance has yet to be evaluated, but the NHS AI Lab has been investing through its AI in Health and Care Awards. The Lab was set up in 2019 to accelerate the safe, ethical and effective adoption of AI in health and social care. Over the past few years, some £123 million has been invested in 86 different AI technologies. These include stroke diagnosis, cancer screening, cardiovascular monitoring, mental health, osteoporosis detection, early warning and clinician support tools for obstetrics, and applications for remotely managing conditions at home.11

This June the Government announced a new £21 million AI Diagnostic Fund to accelerate deployment of the most promising AI decision support tools in all 5 stroke networks covering 37 hospitals by the end of 2023, given results showing more patients being treated, more efficient and faster pathways to treatment and better patient outcomes.12

In the wider life sciences research field, which our original Lords enquiry dwelt on less, there has been ground-breaking research work by DeepMind in its AlphaFold prediction of protein structures.13 Insilico Medicine has used generative AI for drug discovery in idiopathic pulmonary fibrosis, which it claims saved two years on the route to market.14 GSK has worked with Cerebras to develop a large language model to analyse data from genetic databases, which it says means it can take a more predictive approach to drug discovery.15

Despite these developments, the rate of adoption of AI is still too slow. Many clinicians and researchers have emphasized this, not least recently to the Health and Social Care Committee.

There are a variety of systemic issues in the NHS which still need to be overcome. We lag far behind health systems such as those of Israel, a pioneer of the virtual hospital,16 and Estonia.17

The recent NHS Long Term Workforce Plan 18 rightly acknowledges that AI, by augmenting human clinicians, will be able greatly to relieve pressures on our health service. But one of the principal barriers is a lack of the skills needed to exploit and procure the new technologies, especially for working alongside AI systems. As the Plan recognizes, the introduction of AI into potentially so many healthcare settings has huge implications for the healthcare professions. In particular, there is a need to strike a balance between AI assistance and human augmentation on the one hand and substitution on the other, with all its implications for future deskilling.

Addressing this is itself a digital challenge, which the NHS Digital Academy, a virtual academy set up in 2018,19 was designed to meet. The Topol Review, “Preparing the Healthcare Workforce to Deliver the Digital Future”, tackled these issues. It was instituted by Jeremy Hunt when Health Secretary and reported in February 2019.20 Above all, it concluded that NHS organisations will need to develop an expansive learning environment and flexible ways of working that encourage a culture of innovation and learning. Similarly, the review by Sir Paul Nurse of the research, development and innovation organisational landscape21 highlighted a skills and training gap across these different areas, as well as siloed working. The current reality on the ground, however, is that the adoption of AI and digital technology still does not feature in workforce planning. Nor is it reflected in medical training, which is very traditional in its approach.

More specific AI-related skills are being delivered through the AI Centre for Value Based Healthcare. This is led by King’s College London and Guy’s and St Thomas’ NHS Foundation Trust, alongside a number of NHS trusts, universities and UK and multinational industry partners.22 Its Fellowship in Clinical AI is funded by grants from UK Research and Innovation (UKRI), the Department of Health and Social Care (DHSC) and the Office for Life Sciences. It is a year-long programme, integrated part-time alongside the clinical training of doctors and dentists approaching consultant level. It was first piloted in London and the South East in 2022 and is now being rolled out more widely. But although it has been a catalyst for collaboration, it has yet to make an impact at any scale. The fact remains that outside the major health centres there is still insufficient financial or administrative support for innovation.

Set against these ambitions, many NHS clinicians complain that the IT in the health service is simply not fit for purpose. This applies particularly in areas such as breast cancer screening.

One of the key areas where developers and adopters can also find frustrations is the multiplicity of regulators and regulatory processes. Some reports have identified blockages in the regulatory process for the “innovation pathway”. One example is the Pro-innovation Regulation of Technologies Review: Life Sciences,23 by Professor Dame Angela McLean, the Government’s Chief Scientific Adviser. At the same time, however, we need to be mindful of the patient safety findings, shocking it must be said, of the Cumberlege Review, the Independent Medicines and Medical Devices Safety Review.24

To streamline the AI regulatory process, the NHS AI Lab has set up the AI and Digital Regulations Service (formerly the Multi-Agency Advisory Service). This is a collaboration between four regulators: the National Institute for Health and Care Excellence (NICE), the Medicines and Healthcare products Regulatory Agency (MHRA), the Health Research Authority and the Care Quality Commission.25

The MHRA’s own Software and AI as a Medical Device Change Programme Roadmap is a good example of how an individual health regulator is gearing up for the AI regulatory future. The intention is to produce guidance in a variety of areas. This includes clarifying the distinction between software as a medical device and wellbeing and lifestyle software products. It also includes the distinction between software as a medical device and medicines and companion diagnostics.26

Specific regulation of AI systems is another consideration that healthcare AI developers and adopters will need to take into account going forward. There are no specific proposals in the UK at present. But the EU’s AI Act will set up a regulatory regime for high-risk AI systems and applications that are made available within the EU, used within the EU, or whose output affects people in the EU.

Whatever regulatory regime applies, the higher the impact an AI application has on the patient, the stronger the need for clear and continuing ethical governance to ensure trust in its use. That governance covers preventing potential bias, ensuring explainability, accuracy, privacy, cybersecurity and reliability, and determining how much human oversight should be maintained. This will become even more important in the long term if AI systems in healthcare become more autonomous.27

In particular, AI in healthcare will not be successfully deployed unless the public is confident about four things: that its health data will be used in an ethical manner, that it is of high quality, that it is assigned its true value, and that it is used for the greater benefit of UK healthcare. The Ada Lovelace Institute, in conjunction with the NHS AI Lab, has developed an algorithmic impact assessment for data access in a healthcare environment. It demonstrates the crucial risk and ethical factors that need to be considered in the context of AI development and adoption.28

For consumer self-care products, explainability statements will need to become the norm. One example is the statement developed by Best Practice AI for Healthily’s AI smart symptom checker, which provides a non-technical explanation of the app to its customers, regulators and the wider public.29 We have also just seen the introduction of British Standard BS 30440, designed as a validation framework for the use of AI in healthcare.30 All these are steps in the right direction. But if the technology is to be trustworthy and patients are to be safe, regulation needs to incentivise adoption of, and compliance with, these standards.

The adoption of, and alignment with, global standards is a governance requirement of growing relevance too. In 2021 the WHO produced important guidance on the Ethics and Governance of Artificial Intelligence for Health.31

Patient data access is crucial for research and for training AI systems. Yet issues around it, including public trust and the procedures for sharing and access, have long bedevilled progress on AI development. This was recognized in the Data Saves Lives strategy of 2022 32 and led to the Goldacre Review, which reported earlier this year.33

As a result, greater interoperability and a Federated Data Platform made up of Secure Research Environments are now emerging. Data governance requirements are also becoming clearer, which will allow researchers to navigate access to data more easily whilst safeguarding patient confidentiality.

All this links to the barriers researchers and developers face in conducting clinical trials, which have been recognized as a major impediment to innovation. The UK has fallen behind in global research rankings as a result. The O’Shaughnessy review on clinical trials, which reported earlier this year, made a number of key recommendations:34

- A national participatory process on patient consent to examine how to achieve greater data usage for research in a way that commands public trust. The National Data Guardian has long called for much greater public communication and engagement on this.

- Urgent publication of guidance for NHS bodies on engaging in research with industry. This is particularly welcome. At the time of our Lords report, the Information Commissioner criticized the Royal Free for its arrangement with DeepMind, which developed the Streams app to detect acute kidney injury, finding that it breached data protection law.35 NHS bodies have since entered into other questionable commercial relationships. A standard protocol for the use of NHS patient data for commercial purposes, ensuring that benefit flows back into the health service, has long been needed and is only just now emerging.

- Above all, it recommended a much-needed national directory of clinical trials to give much greater visibility to national trials activity for the benefit of patients, clinicians, researchers and potential trial sponsors.

Following the Health and Care Act 2022, NHSX and NHS Digital have been merged into the Transformation Directorate of NHS England. NHSX was specifically designed, when set up in 2019, to speed up innovation and technology adoption in the NHS. The impact of this reorganisation is yet to be seen, but there is clearly an ambition for the new structure to be more effective in driving innovation.

The pace of drug discovery through AI has undoubtedly quickened over recent years, but the AI healthcare investment environment is a risky and rocky road. Adopting AI techniques for drug discovery does not necessarily shortcut the uncertainties. The AI drug discovery company BenevolentAI is a case in point: it recently announced that it would need to shed up to 180 of its 360 staff.36

Pharmaceutical companies are also adamant that the NHS branded drug pricing system in the UK is a disincentive to drug development. It remains to be seen, however, what the newly negotiated voluntary and statutory agreements will deliver.

To conclude, expectations of AI in healthcare are high but where is the next real frontier and where should we be focusing our research and development efforts for maximum impact? Where are the gaps in adoption?

It is still the case that much unrealized AI potential lies in non-clinical aspects of healthcare, such as workforce planning and mapping demand to future needs. This needs to be allied with day-to-day clinical predictive AI tools for patient care, which can link datasets and combine analysis of imaging and patient records.

In my view too, of even greater significance than improvements in diagnosis and treatment, is a new emphasis on a preventative philosophy through the application of predictive AI systems to genetic data. As a result, long term risks can be identified and appropriate action taken to inform patients and clinicians about the likelihood of their getting a particular disease including common cancer types.

Our Future Health is one such new project, working in association with the NHS.37 The plan is to collect the vital health statistics of 5 million adult volunteers. It is probably overambitious, and not without controversy in terms of its intention to link to non-health government data.

With positive results from this kind of genetic work, however, AI in primary care could come into its own, capturing the potential for illness and disease at a much earlier stage. This is where, I believe, the greatest potential ultimately lies for impact on our national health and for greater equity in life expectancy across our population. Alongside this, however, the greatest care needs to be taken to retain public trust in personal data access and use, and in the ethics of the AI systems used.

Footnotes

1. AI in the UK: Ready, Willing and Able?, House of Lords AI Select Committee, 2018

2. Royal College of Physicians, Artificial intelligence in healthcare Report of a working party, https://www.rcplondon.ac.uk/projects/outputs/artificial-intelligence-ai-health

3. House of Commons Health and Social Care Committee, Future cancer

4. Brainomix’s e-Stroke Software Triples Stroke Recovery Rates, https://www.brainomix.com/news/oahsn-interim-report/

5. Wysa, Evidence-based Conversational AI for Mental Health

6A. https://www.newstatesman.com/spotlight/healthcare/innovation/2023/10/ai-diagnosis-technology-artificial-intelligence-healthcare

7. AI cure for bed blocking can predict hospital stay

8. Clinical trial evaluates implanted tech that wirelessly stimulates spinal cord to restore movement after paralysis; Walking naturally after spinal cord injury using a brain–spine interface

9. Babylon, “the future of the NHS”, goes into administration

9A. https://sites.research.google/med-palm/

10. Foundation models for generalist medical artificial intelligence, Nature

10A. https://www.thetimes.co.uk/article/ai-could-detect-dementia-long-before-doctors-claims-oxford-professor-slbdz70v3

11. The Artificial Intelligence in Health and Care Award, NHS AI Lab programmes

12. NHS invests £21 million to expand life-saving stroke care app, https://www.gov.uk/government/news/21-million-to-roll-out-artificial-intelligence-across-the-nhs

13. DeepMind, AlphaFold: a solution to a 50-year-old grand challenge in biology, 2020

14. From Start to Phase 1 in 30 Months, Insilico Medicine

15. GlaxoSmithKline and Cerebras are Advancing the State of the Art in AI for Drug Discovery

16. Israeli virtual hospital is caring for Ukrainian refugees, ISRAEL21c

17. Estonia embraces new AI-based services in healthcare.

18. NHS Long Term Workforce Plan

20. The Topol Review: Preparing the Healthcare Workforce to Deliver the Digital Future

21. The Nurse Review: Research, development and innovation (RDI) organisational landscape: an independent review, GOV.UK

22. The AI Centre for Value Based Healthcare

23. Pro-innovation Regulation of Technologies Review: Life Sciences, GOV.UK

24. Department of Health and Social Care, First Do No Harm: The report of the Independent Medicines and Medical Devices Safety Review, 2020

25. AI and Digital Regulations Service, NHS Transformation Directorate

26. Software and AI as a Medical Device Change Programme: Roadmap, GOV.UK

27. AI Act: a step closer to the first rules on Artificial Intelligence, European Parliament News

28. Algorithmic impact assessment in healthcare, Ada Lovelace Institute

31. World Health Organization, Ethics and governance of artificial intelligence for health, 2021

32. Data saves lives: reshaping health and social care with data, GOV.UK

33. Goldacre Review

34. Commercial clinical trials in the UK: the Lord O’Shaughnessy review, final report, GOV.UK

35. Royal Free breached UK data law in 1.6m patient deal with Google's DeepMind

36. BenevolentAI cuts half of its staff after drug trial flop

Lord C-J calls for action on AI regulation and data rights in King’s Speech Debate

I want to start on a positive note by celebrating the recent Royal Assent of the Online Safety Act and the publication of the first draft code for consultation. I also very much welcome that we now have a dedicated science and technology department in the form of DSIT, although I very much regret the loss of Minister George Freeman yesterday.

Sadly, there are many other less positive aspects to mention. Given the Question on AI regulation today, all I will say is that despite all the hype surrounding the summit, including the PM’s rather bizarre interview with Mr Musk, in reality the Government are giving minimal attention to AI, despite the Secretary of State saying that the world is standing at the inflection point of a technological revolution. Where are we on adjusting ourselves to the kinds of risk that AI represents? Is it not clear that the Science, Innovation and Technology Committee is correct in recommending in its interim report that the Government

“accelerate, not … pause, the establishment of a governance regime for AI, including whatever statutory measures as may be needed”?

That is an excellent recommendation.

I also very much welcome that we are rejoining Horizon, but there was no mention in the Minister’s speech of how we will meet the challenge of getting international research co-operation back to where it was. I am disappointed that the Minister did not give us a progress update on the department’s 10 objectives in its framework for science and technology, and on action on the recommendations of its many reviews, such as the Nurse review. Where are the measurable targets and key outcomes in priority areas that have been called for?

Nor, as we have heard, has there been any mention of progress on Project Gigabit, and no mention either of progress on the new programmes to be undertaken by ARIA. There was no mention of urgent action to mitigate increases to visa fees planned from next year, which the Royal Society has described as “disproportionate” and a “punitive tax on talent”, with top researchers coming to the UK facing costs up to 10 times higher than in other leading science nations. There was no mention of the need for diversity in science and technology. What are we to make of the Secretary of State demanding that UKRI “immediately” close its advisory group on EDI? What progress, too, on life sciences policy? The voluntary and statutory pricing schemes for new medicines currently under consultation are becoming a major impediment to future life sciences investment in the UK.

Additionally, health devices suffer from a lack of development and commercialisation incentives. The UK has a number of existing funding and reimbursement systems, but none is tailored for digital health, which results in a lack of national reimbursement. What can DSIT do to encourage investment and innovation in this very important field?

On cybersecurity, the G7 recognises that red teaming, or what is called threat-led penetration testing, is now crucial in identifying vulnerabilities in AI systems. Sir Patrick Vallance’s Pro-innovation Regulation of Technologies Review of March this year recommended amending the Computer Misuse Act 1990 to include a statutory public interest defence that would provide stronger legal protections for cybersecurity researchers and professionals carrying out threat intelligence research. Yet there is still no concrete proposal. This is glacial progress.

However, we on these Benches welcome the Digital Markets, Competition and Consumers Bill. New flexible, pro-competition powers, and the ability to act ex ante and on an interim basis, are crucial. We have already seen the impact on our sovereign cloud capacity through concentration in just two or three US hands. Is this the future of AI, given that these large language models now developed by the likes of OpenAI, Microsoft, Anthropic, Google and Meta require massive datasets, vast computing power, advanced semiconductors, and scarce digital and data skills?

As the Lords Communications and Digital Committee has said, which I very much welcome, the Bill must not, however, be watered down in a way that allows big tech to endlessly challenge the regulators in court and incentivise big tech firms to take an adversarial approach to the regulators. In fact, it needs strengthening in a number of respects. In particular, big tech must not be able to use countervailing benefits as a major loophole to avoid regulatory action. Content needs to be properly compensated by the tech platforms. The Bill needs to make clear that platforms profit from content and need to pay properly and fairly on benchmarked terms and with reference to value for end users. Can the Minister, in winding up, confirm at the very least that the Government will not water down the Bill?

We welcome the CMA’s market investigation into cloud services, but it must look broadly at the anti-competitive practices of the service providers, such as vendor lock-in tactics and non-competitive procurement. Competition is important in the provision of broadband services too. Investors in alternative providers to the incumbents need reassurance that their investment is going on to a level playing field and not one tilted in favour of the incumbents. Can the Minister reaffirm the Government’s commitment to infrastructure competition in the UK telecommunications industry?

The Data Protection and Digital Information Bill is another matter. I believe the Government are clouded by the desire to diverge from the EU to get some kind of Brexit dividend. The Bill seems largely designed, contrary to what the Minister said, to dilute the rights of data subjects where it should be strengthening them. For example, there is concern from the National AIDS Trust that permitting intragroup transmission of personal health data

“where that is necessary for internal administrative purposes”

could mean that HIV/AIDS status will be inadequately protected in workplace settings. Even on the Government’s own estimates it will have a minimal positive impact on compliance costs, and in our view it will simply lead to companies doing business in Europe having to comply with two sets of regulation. All this could lead to a lack of EU data adequacy.

The Bill is a dangerous distraction. Far from weakening data rights, as we move into the age of the internet of things and artificial intelligence, the Government should be working to increase public trust in data use and sharing by strengthening those rights. There should be a right to an explanation of automated systems, where AI is only one part of the final decision in certain circumstances, for instance where policing, justice, health, or personal welfare or finance is concerned. We need new models of personal data controls, which were advocated by the Hall-Pesenti review as long ago as 2017, especially through new data communities and institutions. We need an enhanced ability to exercise our right to data portability. We need a new offence of identity theft and more, not less, regulatory oversight over use of biometrics and biometric technologies.

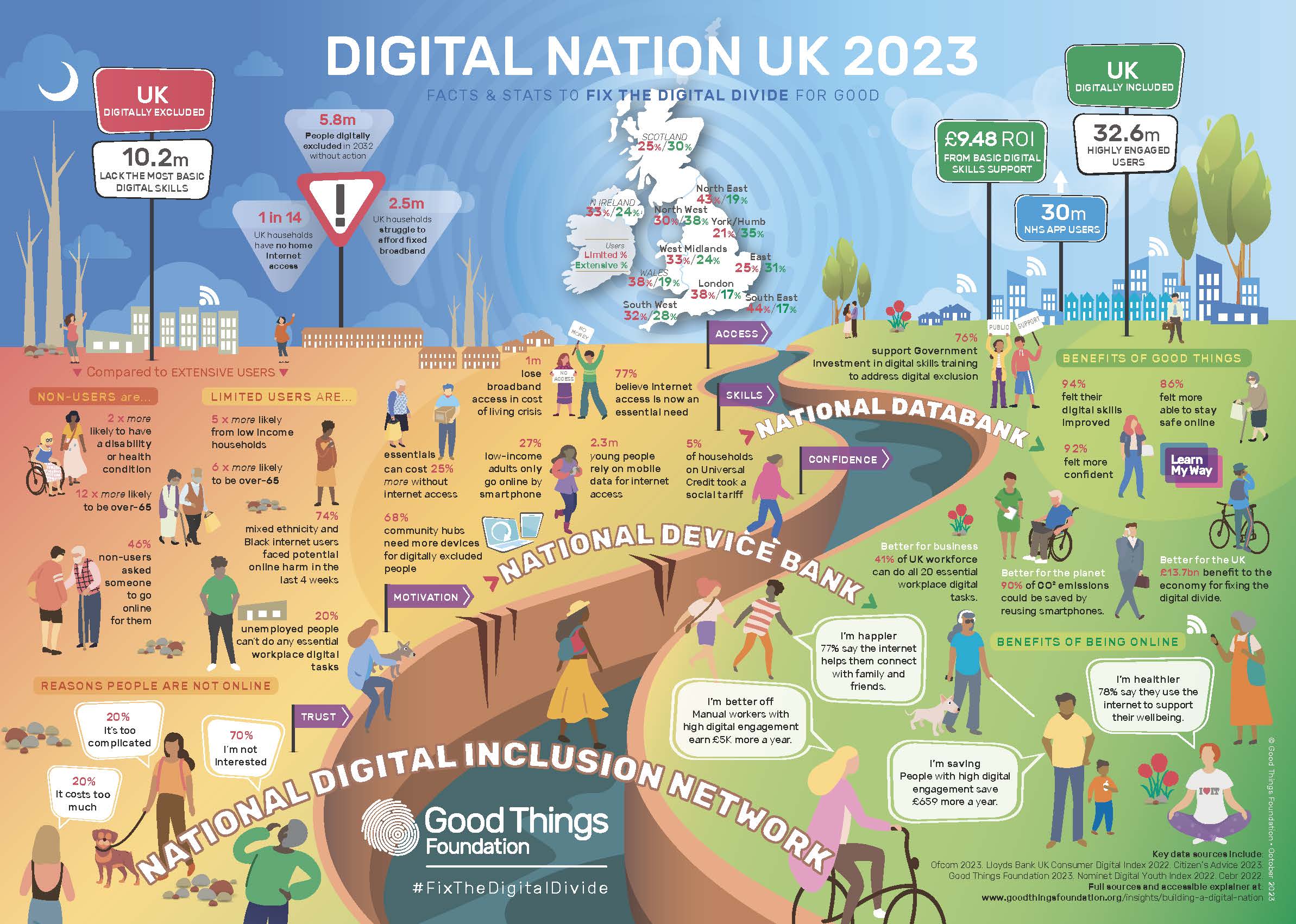

One of the key concerns we all have as the economy becomes more and more digital is data and digital exclusion. Does DSIT have a strategy in this respect? In particular, as Citizens Advice said,

“consumers faced unprecedented hikes in their monthly mobile and broadband contract prices”

as a result of mid-contract price rises. When will the Government, Ofcom or the CMA ban these?

There are considerable concerns about digital exclusion, for example regarding the switchover of voice services from copper to fibre. It is being carried out before most consumers have been switched on to full fibre infrastructure and puts vulnerable customers at risk.

There are clearly great opportunities to use AI within the creative industries, but there are also challenges, big questions over authorship and intellectual property. Many artists feel threatened, and this was the root cause of the recent Hollywood writers’ and actors’ strike. What are the IPO and government doing, beyond their consultation on licensing in this area, to secure the necessary copyright and performing right reform to protect artists from synthetic versions?

I very much echo what the noble Baroness, Lady Jones, said about misinformation during elections. We have already seen two deepfakes related to senior Front-Bench Members—shadow spokespeople—in the Commons. It is concerning that those senior politicians appear powerless to stop this.

My noble friends will deal with the Media Bill. The Minister did not talk of the pressing need for skilling and upskilling in this context. A massive skills and upskilling agenda is needed, as well as much greater diversity and inclusion in the AI workforce. We should also be celebrating Maths Week England, which I am sure the Minister will do. I look forward to the three maiden speeches and to the Minister’s winding up.

Lords call for action on Digital Exclusion

The House of Lords recently debated the Report of the Communications and Digital Committee on Digital Exclusion. This is an edited version of what I said when winding up the debate.

Trying to catch up with digital developments is a never-ending process, and the theme of many noble Lords today has been that the sheer pace of change means we have to be a great deal more active in what we are doing in terms of digital inclusion than we are being currently.

Access to data and digital devices affects every aspect of our lives, including our ability to learn and work; to connect with online public services; to access necessary services, from banking to healthcare; and to socialise and connect with the people we know and love. For those with digital access, particularly in terms of services, this has been hugely positive, as access to the full benefits of state and society has never been more flexible or convenient if you have the right skills and the right connection.

However, a great number of our citizens cannot take advantage of these digital benefits. Lack of access to devices, broadband and mobile connectivity is a major source of data poverty and digital exclusion. This proved to be a major issue during the Covid pandemic. Of course the digital divide has not gone away subsequently, and it does not look as though it is going to any time soon.

There are new risks coming down the track, too, in the form of BT’s Digital Voice rollout. The Select Committee’s report highlighted the issues around digital exclusion. For example, it said that 1.7 million households had no broadband or mobile internet access in 2021; that 2.4 million adults were unable to complete a single basic task to get online; and that 5 million workers were likely to be acutely underskilled in basic skills by 2030. The Local Government Association’s report, The Role of Councils in Tackling Digital Exclusion, showed a very strong relationship between having fixed broadband and higher earnings and educational achievement, such as being able to work from home or for schoolwork.

To conflate two phrases that have been used today, this may be a Cinderella issue but “It’s the economy, stupid”. To borrow another phrase used by the noble Baroness, Lady Lane-Fox, we need to double down on what we are already doing. As the committee emphasised, we need an immediate improvement in government strategy and co-ordination. The Select Committee highlighted that the current digital inclusion strategy dates from 2014. They called for a new strategy, despite the Government’s reluctance. We need a new framework with national-level guidance, resources and tools that support local digital inclusion initiatives.

The current strategy seems to be bedevilled by the fact that responsibility spans several government departments. It is not clear who, if anyone, at ministerial and senior officer level has responsibility for co-ordinating the Government’s approach. Lord Foster mentioned accountability, and Lady Harding talked about clarity around leadership. Whatever it is, we need it.

Of course, in its report, the committee stressed the need to work with local authorities. A number of noble Lords have talked today about regional action, local delivery, street-level initiatives: whatever it is, again, it needs to be at that level. As part of a properly resourced national strategy, city and county councils and community organisations need to have a key role.

The Government, too, should play a key role in building inclusive digital local economies. However, it is clear that there is very little strategic guidance to local councils from central government on tackling digital exclusion. As the committee also stresses, there is a very important role for competition in broadband rollout, especially in giving investors in alternative providers to the incumbents the reassurance that their investment is going on to a level playing field. I very much hope that the Minister will affirm the Government’s commitment to those alternative providers in terms of the delivery of infrastructure in the communications industry.

Is it not high time that we upgraded the universal service obligation? The committee devoted some attention to this and many of us have argued for this ever since it was put into statutory form. It is a wholly inadequate floor. We all welcome the introduction of social tariffs for broadband, but the question of take-up needs addressing. The take-up is desperately low at 5%. We need some form of social tariff and data voucher auto-enrolment. The DWP should work with internet service providers to create an auto-enrolment scheme that includes one or both products as part of its universal credit package. Also, of course, we should lift VAT on social tariffs, as the committee recommended, and Ofcom should be empowered to regulate how and where companies advertise their social tariffs.

We also need to make sure that consumers are not driven into digital exclusion by mid-contract price rises. I would very much appreciate hearing from the Minister on where we are with government and Ofcom action on this.

The committee rightly places emphasis on digital skills, which many noble Lords have talked about. These are especially important in the age of AI. We need to take action on digital literacy. The UK has a vast digital literacy skills and knowledge gap. I will not quote Full Fact’s research, but all of us are aware of the digital literacy issues. Broader digital literacy is crucial if we are to ensure that we are in the driving seat, in particular where AI is concerned. There is much good that technology can do, but we must ensure that we know who has power over our children and what values are in play when that power is exercised. This is vital for the future of our children, the proper functioning of our society and the maintenance of public trust. Since media literacy is so closely linked to digital literacy, it would be useful to hear from the Minister where Ofcom is in terms of its new duties under the Online Safety Act.

We need to go further in terms of entitlement to a broader digital citizenship. Here I commend an earlier report of the committee, Free For All? Freedom of Expression in the Digital Age. It recommended that digital citizenship should be a central part of the Government’s media literacy strategy, with proper funding. Digital education in schools should be embedded, covering both digital literacy and conduct online, aimed at promoting civility and inclusion and how they can be practised online. This should feature across subjects such as computing, PSHE and citizenship education, as recommended by the Royal Society for Public Health in its #StatusOfMind report as long ago as 2017.

Of course, we should always make sure that the Government provide an analogue alternative. We are talking about digital exclusion but, for those who are excluded and have the “fear factor”, we need to make sure that we do not assume that all services can be delivered digitally.

Finally, we cannot expect the Government to do it all. We need to draw on and augment our community resources. I am a particular fan of the work of the Good Things Foundation (see their infographic accompanying this), FutureDotNow, CILIP (the library and information association) and the Trussell Trust, and we have heard mention of the churches, which are really important elements of our local delivery. They need our support, and the Government’s, to carry on the brilliant work that they do.

Response to AI White Paper falls short

This is my reaction to the government's response to the AI White Paper, which broadly follows the line taken by the White Paper last year and does not take on board the widespread demand for regulatory action expressed during the consultation.

There is a gulf between the government’s hype about a bold and considered approach and leading in safe AI, and the reality. The fact is we are well behind the curve, responding at a snail’s pace to fast-moving technology. We are far from being bold leaders in AI. This is in contrast to the EU with its AI Act, which is grappling with the here-and-now risks in a constructive way.

As the House of Lords Communications and Digital Committee said in its recent report on Large Language Models, there is evidence of regulatory capture by the big tech companies. The Government, rather than regulating, just keeps saying more research is needed in the face of clear current evidence of the risk of many uses and forms of AI. Apparently it is all too early to think about tackling the risks in front of us. We are expected to wait until we have complete understanding and experience of the risks involved. Effectively, we are being treated as guinea pigs to see what happens, whilst the government talks about the existential risks of AI instead.

Further, the response to the White Paper states that general-purpose AI already presents considerable risks across a range of sectors, in effect admitting that a purely sectoral approach is not practical.

The Government has failed to move from principles to practice. Sticking to its piecemeal, context-specific approach, it is suggesting neither immediate regulation nor any new powers for sector regulators but, as anticipated, the setting up of a new central function (with the CDEI being subsumed into DSIT as the Responsible Technology Adoption Unit) and an AI Safety Institute to assess risk and advise on future regulation. In essence, any action, without new powers, will be left to the existing regulators rather than any new horizontal duties being mandated.

Luckily, others such as the EU, and even the US, contrary to many forecasts, are grasping the nettle.

My view is that

We need early, risk-based horizontal legislation across the sectors, ensuring that standards for a proper risk management framework and impact assessment are imposed when AI systems are developed and adopted, with consequences flowing when a system is assessed as high risk, in the form of additional requirements to adopt standards of transparency and independent audit.

We shouldn’t just focus on existential long-term risk or risk from frontier AI; predictive AI is important too, in terms of automated decision-making, the risk of bias and lack of transparency.

We should focus as well on interoperability with other jurisdictions, which means working with the BSI, ISO, IEEE, OECD and others towards convergence of standards, such as those on risk management, impact assessment, testing, audit, design, continuous monitoring and so on. There are several existing international standards, such as ISO 42001 and 42006, which are ready to be adopted.

The government needs to embed its Algorithmic Transparency Recording Standard in our public services, alongside risk assessment of the AI systems it uses, together with a public register of AI systems in use in government. It also needs to beef up the Data Protection Bill in terms of the rights of data subjects relative to automated decision-making, rather than water them down, and to retain and extend the Data Protection Impact Assessment and DPO for use in AI regulation.

Also, and very importantly in the light of recent news from the IPO, I hope the Government will take strong note of the House of Lords report on the use of copyrighted works by LLMs. The government has adopted its usual approach of relying on voluntary action, but it is clear that this is simply not going to work. The ball is back in its court, and it needs to act decisively to make sure that these works are not ingested into training LLMs without any return to rightsholders.

In summary, we need consistency, certainty and convergence if developers, adopters and consumers are going to innovate safely and take advantage of AI advances, and currently the UK is not delivering the prospect of any of these.

Lord C-J: We Must Regulate AI before AGI arrives

The House of Lords recently debated the development of advanced artificial intelligence, associated risks and approaches to regulation.

This is an edited version of what I said when winding up the debate.

The narrative around AI swirls back and forwards in this age of generative AI, to an even greater degree than when our AI Select Committee conducted its inquiry in 2017-18—it is very good to see a number of members of that committee here today. For instance, in March more than 1,000 technologists called for a moratorium on AI development. This month, another 1,000 technologists said that AI is a force for good. We need to separate the hype from the reality to an even greater extent.

Our Prime Minister seems to oscillate between various narratives. One month we have an AI governance White Paper suggesting an almost entirely voluntary approach to regulation, and then shortly thereafter he talks about AI as an existential risk. He wants the UK to be a global hub for AI and a world leader in AI safety, with a summit later this year.

I will not dwell too much on the definition of AI. The fact is that the EU and OECD definitions are now widely accepted, as is the latter’s classification framework. We need to decide whether it is tool, partner or competitor. We heard today of the many opportunities AI presents to transform many aspects of people’s lives for the better, from healthcare to scientific research, education, trade, agriculture and meeting many of the sustainable development goals. There may be gains in productivity, or in the detection of crime.

However, AI also clearly presents major risks, especially reflecting and exacerbating social prejudices and bias, the misuse of personal data and undermining the right to privacy, such as in the use of live facial recognition technology. We have the spreading of misinformation, the so-called hallucinations of large language models and the creation of deepfakes and hyper-realistic sexual abuse imagery, as the NSPCC has highlighted, all potentially exacerbated by new open-source large language models that are coming. We have a Select Committee looking at the dilemmas posed by lethal autonomous weapons. We have major threats to national security. There is the question of overdependence on artificial intelligence, a rather new but very clearly present risk for the future.

We must have an approach to AI that augments jobs as far as possible and equips people with the skills they need, whether to use new technology or to create it. We should go further on a massive skills and upskilling agenda and much greater diversity and inclusion in the AI workforce. We must enable innovators and entrepreneurs to experiment, while taking on concentrations of power. We must make sure that they do not stifle and limit choice for consumers and hamper progress. We need to tackle the issues of access to semiconductors, computing power and the datasets necessary to develop large language generative AI models.

However, the key and most pressing challenge is to build public trust, as we heard from so many noble Lords, and ensure that new technology is developed and deployed ethically, so that it respects people’s fundamental rights, including the rights to privacy and non-discrimination, and so that it enhances rather than substitutes for human creativity and endeavour. Explainability is key, as the noble Lord, Lord Holmes, said. I entirely agree with the right reverend Prelate that we need to make sure that we adopt these high-level ethical principles, but I do not believe that is enough. A long gestation period of national AI policy-making has ended up producing a minimal proposal for:

“A pro-innovation approach to AI regulation”,

which, in substance, will amount to toothless exhortation by sectoral regulators to follow ethical principles and a complete failure to regulate AI development where there is no regulator.

Much of the White Paper’s diagnosis of the risks and opportunities of AI is correct. It emphasises the need for public trust and sets out the attendant risks, but the actual governance prescription falls far short and goes nowhere in determining how the benefits of AI should be distributed. There is no recognition that different forms of AI are technologies that need a comprehensive cross-sectoral approach to ensure that they are transparent, explainable, accurate and free of bias, whether they are in a regulated or an unregulated sector. Business needs clear central co-ordination and oversight, not a patchwork of regulation. Existing coverage by legal duties is very patchy: bias may be covered by the Equality Act and data issues by our data protection laws but, for example, there is no existing obligation for ethics by design for transparency, explainability and accountability, and liability for the performance of AI systems is very unclear.

We need to be clear, above all, as organisations such as techUK are, that regulation is not necessarily the enemy of innovation. In fact, it can be the stimulus and the key to gaining and retaining public trust around AI and its adoption, so that we can realise the benefits and minimise the risks. What I believe is needed is a combination of risk-based, cross-sectoral regulation, combined with specific regulation in sectors such as financial services, underpinned by common, trustworthy standards of testing, risk and impact assessment, audit and monitoring. We need, as far as possible, to ensure international convergence, as we heard from the noble Lord, Lord Rees, and interoperability of these standards of AI systems, and to move towards common IP treatment of AI products.

We have world-beating AI researchers and developers. We need to support their international contribution, not fool them that they can operate in isolation. If they have any international ambitions, they will have to decide to conform to EU requirements under the forthcoming AI legislation and ensure that they avoid liability in the US by adopting the AI risk management standards being set by the National Institute of Standards and Technology. Can the Minister tell us what the next steps will be, following the White Paper? When will the global summit be held? What is the AI task force designed to do and how? Does he agree that international convergence on standards is necessary and achievable? Does he agree that we need to regulate before the advent of artificial general intelligence?

As for the creative industries, there are clearly great opportunities in relation to the use of AI. Many sectors already use the technology in a variety of ways to enhance their creativity and make it easier for the public to discover new content.

But there are also big questions over authorship and intellectual property, and many artists feel threatened. Responsible AI developers seek to license content which will bring in valuable income. However, many of the large language model developers seem to believe that they do not need to seek permission to ingest content. What discussion has the Minister, or other Ministers, had with these large language model firms in relation to their responsibilities for copyright law? Can he also make a clear statement that the UK Government believe that the ingestion of content requires permission from rights holders, and that, should permission be granted, licences should be sought and paid for? Will he also be able to update us on the code of practice process in relation to text and data-mining licensing, following the Government’s decision to shelve changes to the exemption and the consultation that the Intellectual Property Office has been undertaking?

There are many other issues relating to performing rights and the copying of actors’, musicians’, artists’ and other creators’ images, voices, likenesses, styles and attributes. These are at the root of the Hollywood actors’ and screenwriters’ strike as well as campaigns here from the Writers’ Guild of Great Britain and from Equity. We need to ensure that creators and artists derive the full benefit of technology, such as AI-made performance synthesisation and streaming. I very much hope that the Minister can comment on that as well.

We have only scratched the surface in tackling the AI governance issues in this excellent debate, but I hope that the Minister’s reply can assure us that the Government are moving forward at pace on this and will ensure that a full debate on AI governance goes forward.

A long way off a Science and Technology Superpower

This is an extended version of a speech I made in a recent debate held in the Lords on a report from the Science and Technology Committee, “Science and technology superpower”: more than a slogan?, which extensively discussed government policy in science and technology.

The Committee’s comprehensive report, despite being nearly a year old, still has great currency and relevance. Its conclusions are as valid as they were a year ago.

Sir James Dyson has described the government’s science superpower ambition as a political slogan. Grandiose language about Global Britain from the Integrated Review, and the stated ambition to be a Science Superpower by 2030 (or is it a Science and Technology Superpower?), is clearly overblown and detracts from what needs to be done.

UCL research has demonstrated that, if measured by authorship, the UK accounts for only about 13 per cent of the top 1 per cent of the most highly cited work across all research fields.

As the Committee noted, we have had a proliferation of strategies:

- the R&D Roadmap

- the Innovation Strategy

- the Life Sciences Vision

- the People and Culture Strategy

- the National Space Strategy

- the National Quantum Strategy

- the National Semiconductor Strategy

- and a taskforce on foundation models is being set up

We have had a whole series of attempts at creating a strategy in various areas but with what follow up and delivery?

As well as the strategies I have mentioned, we have had a series of reviews:

- Professor Dame Angela McLean’s Pro-innovation Regulation of Technologies Review: Life Sciences

- the Vallance Review of Pro-innovation Regulation of Digital Technologies

- the Independent Review of Research Bureaucracy by Professor Adam Tickell

- the independent review of UKRI by Sir David Grant

- the Independent Review of the Future of Compute, now announced

- and now the Chancellor’s recent Life Sciences package

Where are the results? What will the KPIs be? What is the shelf life of these reviews, and where is the practical implementation?

Take, for example, the Life Sciences Vision launched back in 2021.

Dame Kate Bingham is quoted as believing that the vaccine scheme’s legacy has been ‘squandered’.

Professor Adrian Hill, director of the Jenner Institute, which was responsible for the Oxford Covid vaccine, has said that the recent loss of the Vaccines Manufacturing and Innovation Centre (VMIC) in Oxfordshire, which had been created to respond to outbreaks, showed that the UK had been going backwards since the coronavirus pandemic.

Business investment is crucial, and nowhere more so than in the life sciences sector.

Lord Hunt of Kings Heath highlighted issues relating to investment in the sector two weeks ago in his regret motion on the Branded Health Service Medicines (Costs) (Amendment) Regulations 2023. All the levers to create incentives for the development of new medicines are under government control.

As his motion noted, the UK’s share of global pharmaceutical R&D fell by over one-third between 2012 and 2020. He argued, rightly, that both the voluntary and statutory pricing schemes for new medicines are becoming a major impediment to future investment in the UK. We seem to be treating the pharma industry as some kind of golden goose.

Despite the government’s Life Sciences Vision, we see Eli Lilly, the American multinational, which had been looking to invest in laboratory space in the UK, putting its plans for London on hold because the UK “does not invite inward investment at this time”, and AstraZeneca deciding to build its next plant in Ireland because of the UK’s “discouraging” tax rate.

The excellent O’Shaughnessy report on clinical trials is all very well, but if there is no commercial incentive to develop and launch new medicines here, why should pharma companies want to engage in clinical trials here? The Chancellor’s growth package for Life Sciences, announced on the 25th May, fails to tackle this crucial aspect.

In other sectors:

- The CEO of Johnson Matthey, a chemicals company with a leading position in green hydrogen, has said the UK is at risk of losing its lead thanks to a policy vacuum.

- The co-founder of ARM, Britain’s biggest semiconductor company, blames our “technologically illiterate political elite” and “Brexit idiocy” for the country’s paltry share of the chips market.

On these benches, however, we do welcome the creation of the new department, and I welcome the launch of the Framework for Science and Technology to inform the work of the department to 2030.

There are 10 key objectives:

- identifying, pursuing and achieving strategic advantage in the technologies that are most critical to achieving UK objectives

- showcasing the UK’s S&T strengths and ambitions at home and abroad to attract talent, investment and boost our global influence

- boosting private and public investment in research and development for economic growth and better productivity

- building on the UK’s already enviable talent and skills base

- financing innovative science and technology start-ups and companies

- capitalising on the UK government’s buying power to boost innovation and growth through public sector procurement

- shaping the global science and tech landscape through strategic international engagement, diplomacy and partnerships

- ensuring researchers have access to the best physical and digital infrastructure for R&D that attracts talent, investment and discoveries

- leveraging post-Brexit freedoms to create world-leading pro-innovation regulation and influence global technical standards

- creating a pro-innovation culture throughout the UK’s public sector to improve the way our public services run

What are the key priority outcomes? What concrete plans for delivery lie behind this? And does this explicitly supersede all of the visions and strategies that have gone before?

As the Committee said in its report:

“The government should set out what it wants to achieve in each of the broad areas of science and technology it has identified, with a clear implementation plan including measurable targets and key outcomes in priority areas, and an explanation of how they will be delivered.”

And: “The government should consolidate existing sector-specific strategies into that implementation plan.”

Also, in terms of vital cross-departmental working and joining up government on science and technology policy, what is the role of the National Science and Technology Council and what are its key priorities?

This applies especially to the Home Office on visas for researchers. If the UK wants to be a world leader in science and technology, it needs to be world-leading in its approach to researcher mobility.

The UK’s upfront costs for work and study visas are up to 6 times higher than the average fees of other leading science nations. The application process for UK visitor visas is also bureaucratic and unwieldy, and the UK has one of the highest visitor visa refusal rates.

There are really important systemic issues which should be a top priority for resolution by the new Department.

Published at the same time as the DSIT framework was the independent review of the UK’s research, development and innovation landscape by Sir Paul Nurse.

Sir Paul calculated our spending at around 2.5% of GDP. But he still concluded that funding, particularly that provided by government, was limited, and below that of other competitive nations such as Germany, South Korea and the US.

There is the particular and urgent problem of Horizon and the uncertainty around our membership. The absolute top priority for UK R&D should be rejoining Horizon. We need access to collaboration across the EU, where pre-Brexit we disproportionately benefited from Horizon’s 95 billion euro budget. We need a clear commitment to negotiate re-entry. What is the position now, nearly two months after the Prime Minister’s letter to Sir Adrian Smith of 14th April assuring him about our intentions on Horizon?

Iceland and Israel, Norway and New Zealand, and Turkey and Tunisia, are all already part of Horizon, as is Ukraine. Why not us?

The two universities of Oxford and Cambridge once received more than £130m a year from European research programmes but are now getting only £1m annually between them.

Meanwhile Britain has fallen behind Russia, Italy and Finland in the world league table for computing power. Britain has slipped from third in the rankings in 2005 to seventh now, according to the Independent Review of The Future of Compute.

The way the UK delivers and supports research is also “not optimal,” the Nurse review said. Of course, research academics here will know the frustrations of the bureaucracy associated with applying for UK research grants. As the Tickell review found, there are issues with bureaucracy around research and development funding.

EPSRC is, according to researchers, admirable in how it oversees research grants. Innovate UK, with its Knowledge Transfer Partnerships, however, has a far more risk-averse and bureaucratic approach just at the point where a bit of commercial risk taking is needed. We need to benchmark to ensure the least bureaucratic processes. I am told that the European Innovation Council is a model.

ARIA was specifically designed to avoid bureaucracy, as we said during the passage of the bill, but why weren't all of UKRI's processes remodelled rather than creating a new entity? Incidentally, do we have any more information now about its key research and development projects?

The government doesn’t really have a clear idea of the role of university research either. The Research Excellence Framework has the perverse incentive of discouraging cooperation. We should be encouraging strategic partnerships in research, especially internationally, as the Committee concludes.

Commercialisation is a crucial aspect linking R&D to economic growth. This in turn means the need for a consistent industrial strategy with the right commercial incentives and an understanding of the value of intangible assets such as IP and data. In this context Catapults are performing a brilliant job but need a bigger role and more resource as the Committee have recommended in a previous report.

Of course, for the tech sector's sake we are all much relieved by the rescue of Silicon Valley Bank UK by HSBC. But the popularity of a US bank in a way demonstrates that we may have a growing start-up culture, but scale-up is still a problem, as Sir Patrick Vallance said in his evidence to the Commons Science, Innovation and Technology Committee last month.

The US is still preferred over London for listing by tech companies, as we’ve seen with UK-based ARM seeking a listing in the US.

There are aspects of wider government policy where there is no perceived benefit to UK science and technology:

- Reduction of R&D tax credits for SMEs

- Raising corporation tax to 25%

One of the long-outstanding issues is reform to assist with de-risking so that pension funds can play their part in helping grow tech companies. This was also mentioned by Sir Patrick as an important aspiration, and I see that finally something is stirring from the British Business Bank with the LIFTS initiative.

There are many other elements that need to be covered for a viable science and technology strategy.

Regulatory Divergence

Even where the government thinks it is being innovation-friendly, it is clouded by the desire to diverge from the EU to get some kind of Brexit dividend. It talks about pro-innovation regulation, but, contrary to the advice of virtually every prominent technologist, the AI governance white paper concerns itself only with voluntary sectoral regulation and leaves it to the regulators in particular sectors. It does not propose a broader risk-based regulatory regime like that proposed under the EU’s AI Act or indeed by the US Administration’s Blueprint for an AI Bill of Rights. Maybe that will change after the Prime Minister’s visit to the US this week.

Changes to our data protection regime proposed by the Data Protection and Digital Information (No. 2) Bill, which go further than just clarifying the retained GDPR and of course make changes to the ICO’s structure, could lead to a loss of EU data adequacy and new compliance costs.

We have waited forever for competition law in the digital space to be reformed through a Digital Markets Act and for the Digital Markets Unit to be put on a statutory basis, and now we have a bill which gives inadequate powers to the CMA to act swiftly to ensure competition. In the meantime the so-called hyperscalers gain greater and greater influence over the development of new technologies such as AI.

Diversity

In the wise words of the British Science Association, we must ensure the opportunities and benefits are equitable in any future science strategy, not only to shape a world-leading science industry, but to sustain progress and successfully bring out the potential of people from all communities, backgrounds and regions. Britain cannot be a superpower if parts of society are not welcomed and able to contribute to science research and innovation. Making science inclusive – from classroom to career – is essential to establishing a globally competitive workforce.

Mathematics

As regards mathematics, where is the promised £300m of additional funding for mathematical science research announced in January 2020, or the National Academy for the Mathematical Sciences, or a National Strategy for Mathematics?

So much to do for the new Department. I hope that the Minister and his colleagues in DSIT will rise to the challenge.

The long-awaited AI Governance White Paper falls far short of what is needed

The government's AI Governance White Paper, “A pro-innovation approach to AI regulation”, came out in late March. This is my take on it, published by Politics Home.

Last week over a thousand leading technologists wrote an open letter pointing out the “profound risks to society and humanity” of artificial intelligence (AI) systems with human-competitive intelligence.

They called inter alia for AI developers to “work with policymakers to dramatically accelerate development of robust AI governance systems”. So, it is ironic that our government published proposals for AI governance barely worthy of the name.

This at a time when interest in, and apprehension about, the capabilities of new AI such as ChatGPT and GPT-4 has never been higher.

Business needs a clear central oversight and compliance mechanism, not a patchwork of regulation

A long gestation period of national AI policy making, which started so well back in 2017 with the Hall-Pesenti Review and the creation of the Centre for Data Ethics and Innovation, the Office for AI and the AI Council, has ended up producing a minimal proposal for “a pro-innovation approach to AI regulation”. In substance this amounts to toothless exhortation by sectoral regulators to follow ethical principles and a complete failure to oversee AI development with no new regulator.

Much of the white paper’s diagnosis is correct in terms of the risks and opportunities of AI. It emphasizes the need for public trust and sets out the attendant risks and adopts a realistic approach to the definition of AI. It makes the case for central coordination and even admits that this is what business has asked for, but the actual governance prescription falls far short.

The suggested form of governance of AI is a set of principles and exhortations which various regulators – with no lead regulator – are being asked to interpret in a range of sectors under the expectation that they will somehow join the dots between them. They will have no new enforcement powers. There may be standards for developers and adopters but no obligation to adopt them.

There is no recognition that the different forms of AI are technologies that need a comprehensive cross-sectoral approach to ensure that they are transparent, explainable, accurate and free from bias whether they are in an existing regulated or unregulated sector. Business needs a clear central oversight and compliance mechanism, not a patchwork of regulation. The government’s proposals will not meet its objective of ensuring public trust in AI technology.

The government seems intent on going forward entirely without regard to what any other country is doing, in the belief that somehow this is pro-innovation. It does not recognise the need for our developers to be confident they can exploit their technology internationally.

Far from being world-leading or turbocharging growth, in practice our developers and adopters will be forced to look over their shoulder at other, more rigorous jurisdictions. If they have any international ambitions, they will have to conform to European Union requirements under the forthcoming AI Act and ensure they avoid liability in the United States by adopting the AI risk management standards being set by the National Institute of Standards and Technology. Once again government ideology is militating against the interests of our business and science and technology communities.

What is needed – which I sincerely hope the Science and Technology Committee will recommend in its inquiry into AI governance – is a combination of risk-based, cross-sectoral regulation combined with specific regulation in sectors, such as financial services, underpinned by common trustworthy international standards of risk assessment, audit and monitoring.

We have world-beating AI researchers and developers. We need to support their international contribution, not fool them into thinking they can operate in isolation.

Lord Clement-Jones, Liberal Democrat peer and spokesperson for Science, Innovation and Technology in the Lords and co-founder of the All Party Parliamentary Group on AI.

Common Ethics and Standards and Compatible Regulation will let responsible AI Flourish

This is the talk I gave at the opening of the excellent Portraits of AI Leadership Conference, organised by Ramsay Brown of the AI Responsibility Lab based in LA and Dr Julian Huppert, Director of the Intellectual Forum at Jesus College Cambridge.

It’s a pleasure to help kick off proceedings today.

Now you may well ask why a lawyer like myself fell among tech experts like yourselves.

In 2016, as a digital spokesperson at an Industry and Parliament Trust breakfast, I realised that the level of parliamentary understanding was incredibly low, so with Stephen Metcalfe MP, then the chair of the Science and Technology Select Committee, I founded the All Party Parliamentary Group on Artificial Intelligence. The APPG is dedicated to informing parliamentarians about developments and creating a community of interest around future policy regarding AI, its adoption, use and regulation.