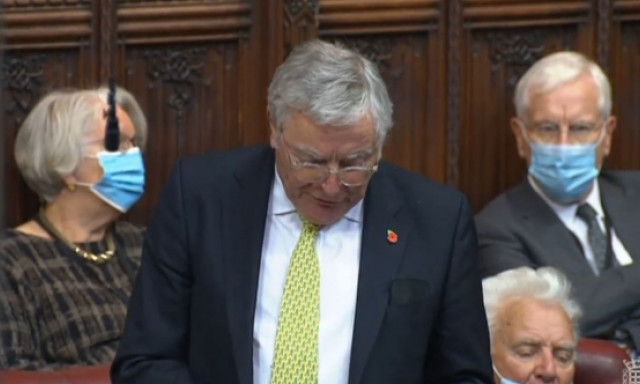

Launch of AI Landscape Overview: Lord C-J on AI Regulation

It was good to launch the new Artificial Intelligence Industry in the UK Landscape Overview 2021: Companies, Investors, Influencers and Trends with the authors from Deep Knowledge Analytics and Big Innovation Centre and my APPG AI Co-Chair Stephen Metcalfe MP, Professor Stuart Russell the Reith lecturer, Charles Kerrigan of CMS and Dr Scott Steedman of the BSI.

Here is the full report online

https://mindmaps.innovationeye.com/reports/ai-in-uk

And here is what I said about AI Regulation at the launch:

A little under 5 years ago we started work on the AI Select Committee enquiry that led to our Report AI in the UK: Ready Willing and Able? The Hall/Pesenti Review of 2017 came at around the same time.

Since then many great institutions have played a positive role in the development of ethical AI. Some are newish like the Centre for Data Ethics and Innovation, the AI Council and the Office for AI; others are established regulators such as the ICO, Ofcom, the Financial Conduct Authority and the CMA, which have put together a new Digital Regulators Cooperation Forum to pool expertise in this field. This role includes sandboxing and input from a variety of expert institutes, such as the Turing, Open Data, the Ada Lovelace, the OII and the British Standards Institute, on areas such as risk assessment, audit, data trusts and standards. Our Intellectual Property Office too is currently grappling with issues relating to IP created by AI.

The publication of the National AI Strategy this Autumn is a good time to take stock of where we are heading on regulation. We need to be clear above all, as organisations such as techUK are, that regulation is not the enemy of innovation; it can in fact be the stimulus and the key to gaining and retaining public trust around AI and its adoption, so we can realise the benefits and minimise the risks.

I have personally just completed a very intense examination of the Government’s proposals on online safety where many of the concerns derive from the power of the algorithm in targeting messages and amplifying them. The essence of our recommendations revolves around safety by design and risk assessment.

As is evident from the work internationally by the Council of Europe, the OECD, UNESCO, the Global Partnership on AI and the EU with its proposal for an AI Act, in the UK we need to move forward with proposals for a risk based regulatory framework which I hope will be contained in the forthcoming AI Governance White Paper.

Some of the signs are good. The National AI Strategy accepts the fact that we need to prepare for AGI, and in the Strategy they also talk about

- public trust and the need for trustworthy AI,

- that Government should set an example,

- the need for international standards and an ecosystem of AI assurance tools

and in fact the Government have recently produced a set of Transparency Standards for AI in the public sector.

On the other hand

- Despite little appetite in the business or the research communities, they are consulting on major changes to the GDPR post Brexit, in particular the suggestion that we get rid of Article 22, which is the one bit of the GDPR dealing with a human in the loop, and not requiring firms to have a DPO or DPIAs

- Most recently, after a year’s work by the Council of Europe’s Ad Hoc Committee on the elements of a legal framework on AI, at the very last minute the Government put in a reservation saying they couldn’t yet support the document going to the Council because more gap analysis was needed, despite extensive work on this in the feasibility study

- We also have no settled regulation for intrusive AI technology such as live facial recognition

- Above all it is not yet clear whether they are still wedded to sectoral regulation rather than horizontal

So I hope that when the White Paper does emerge there is recognition that we need a considerable degree of convergence between ourselves, the EU and members of the COE in particular, for the benefit of our developers and cross border business, and that a risk based form of horizontal regulation is required which operationalizes the common ethical values we have all come to accept, such as the OECD principles.

Above all this means agreeing on standards for risk and impact assessments alongside tools for audit and continuous monitoring for higher risk applications. That way I believe we can draw the US into the fold as well.

This is of course not to mention the whole Defence and Lethal Autonomous Systems space, the subject of Stuart Russell’s Second Reith Lecture, which despite the promise of a Defence AI Strategy is another and much more depressing story!

Lord C-J: Lords Diary in the House Magazine

From the House Magazine

https://www.politicshome.com/thehouse/article/lords-diary-lord-clement-jones

Down the corridor in the Commons, early November was dominated by No 10’s Owen Paterson U-turn and in Glasgow by events at COP26 – but for me it was an opportunity to raise a number of issues relevant to innovation and the development of technology and the future of our creative industries. It started with the great news that Queen Mary University of London, whose council I chair, after many trials and tribulations, has agreed a property deal next to our Whitechapel campus with the Department of Health and Social Care. It paves the way for the development, with Barts Life Sciences, of a major research centre within a new UK Whitechapel Life Sciences Cluster.

And tied in with the theme of tech innovation, a couple of welcome in-person industry events too – the techUK dinner and the Institute of Chartered Accountants in England and Wales’ annual reception – where a new initiative, to increase investment in the UK’s high-growth companies, was launched. Guest speaker Lord Willetts made the point about the UK’s great R&D but relatively poor track record in commercialisation.

All highly relevant to a late session on the second reading of the bill setting up the new ARIA (Advanced Research and Development Agency). A cautiously positive reception, as most speakers were uncertain where it fits in our R&D and innovation landscape. It may be designed to be free of bureaucracy, but the question arises, how does that reflect on the relatively recent UK Research and Innovation infrastructure?

The risks posed by some new technology were highlighted in my oral question the day before on whether the UK will join in steps to limit Lethal Autonomous Weapons. The MoD still seems to be hiding behind the lack of an agreed international definition whilst insisting, not very reassuringly, that we do not use systems that employ lethal force without “context-appropriate human involvement”.

Other digital harms were to the fore with successive sessions of the Joint Committee on the Draft Online Safety Bill. The first, taking evidence from Ofcom CEO Melanie Dawes; in command of her material, but questions still remain over whether Ofcom will have the powers and independence it needs. Then a round table looking at how and whether the bill protects press freedom, and our final evidence session with Nadine Dorries, the new secretary of state, in listening mode and hugely committed to effectively eradicating harms online. There are many improvements to the bill needed but we are up against the necessity for early implementation.

"The news broke that facial recognition software in cashless payment systems had been adopted in nine Ayrshire schools"

And, so, the Lib Dem debate day. The first on government policy and spending on the creative sector in the United Kingdom, superbly introduced by colleague Lynne Featherstone. I focused on a number of sectors under threat: independent TV and film producers, publishers, from potential changes to exhaustion of copyright, authors, from the closure of libraries and the aftermath of Covid, and the music industry, from lockdowns and the post Brexit inability to tour in the EU. The overarching theme of the debate, so relevant to innovation and our tech sector, was that creativity is important not just in the cultural sector but across the whole economy – a point it seems well taken by the minister, Stephen Parkinson, in his new role, with a meeting with his (also new) ministerial counterpart in the Department for Education in the offing.

Back to technology risk, in the next debate, about the use of facial and other biometric recognition technologies in schools. A little over two weeks before, the news broke that facial recognition software in cashless payment systems had been adopted in nine Ayrshire schools. Its introduction has been temporarily paused, and the Information Commissioner’s Office is now producing a report, but it is clear that current regulation is inadequate.

Quite a week. It ends with a welcome unwinding over a Friday evening negroni and excellent Italian meal, with close family, some not seen in person for two years!

Government refuses to rule out development of lethal autonomous weapons

Here is UNA-UK's write-up of a recent oral question I asked about the status of UK negotiations:

During a parliamentary debate in the House of Lords, the UK Government repeatedly refused to rule out the possibility that the UK may deploy lethal autonomous weapons in the future.

At the dedicated discussion on autonomous weapons (also known as “killer robots”), members of the Liberal Democrats, Labour and Conservative parties all expressed concern around the development of autonomous weapons. Several parliamentarians, including Lord Coaker speaking for Labour and Baroness Smith speaking for the Liberal Democrats, asked the UK to unequivocally state that there will always be a human in the loop when decisions over the use of lethal force are taken. Responding to these calls, defence minister Baroness Goldie simply stated that the “UK does not use systems that employ lethal force without context appropriate human involvement”.

This formulation, which was used twice by the Minister, offers less reassurance over the UK’s possible future use of these weapons than the UK’s previous position that Britain “does not possess fully autonomous weapon systems and has no intention of developing them”.

The debate was triggered by Lord Clement-Jones, former chair of the Lords Artificial Intelligence Committee and member of the UK Campaign to Stop Killer Robots’ parliamentary network, who described the Minister’s unwillingness to rule out lethal autonomous weapons as “disappointing”. He went on to describe the UK’s refusal to support calls for a legally binding treaty on this issue as “at odds with almost 70 countries, thousands of scientists [...] and the UN Secretary-General”.

Former First Sea Lord, Lord West, raised the alarm that “nations are sleepwalking into disaster...engineers are making autonomous drones the size of my hand that have facial recognition and can carry a small, shaped charge and they will kill a person”, mentioning that thousands of such weapons could be unleashed on a city to “horrifying” effect.

Speaking from the Shadow Front Bench, Lord Coaker asked for an unequivocal commitment from the Government that there will “always be human oversight when it comes to AI [...] involvement in any decision about the lethal use of force”. This builds on Labour’s position articulated by Shadow Defence Secretary, John Healey MP, in his September 2021 conference speech where he stated that a Labour Government would “lead moves in the UN to negotiate new multilateral arms controls and rules of conflict for space, cyber and AI.”

Former Defence Secretary, Lord Browne, asked for the UK to publicly reaffirm its commitment to ethical AI and questioned the Minister on the MOD’s forthcoming Strategy on AI. Minister Goldie explained that the MOD’s planned Defence AI Strategy was slated for Autumn while conceding that Autumn “had pretty well come and gone”, adding that the Strategy would be published “in early course”. Conservative peer Lord Holmes asked a related question on the need for public engagement and consultation on the UK’s approach to AI across sectors. UNA-UK agrees with this imperative for public consultation, and was concerned to learn through an FOI request that “the MOD has carried out no formal public consultations or calls for evidence on the subject of military AI ethics since 2019”.

Today’s activity in the House of Lords follows similar calls made in December 2020 in the House of Commons, when another member of our parliamentary network, the SNP’s spokesperson on foreign affairs Alyn Smith MP, made a powerful call for the UK to support a ban on lethal autonomous weapons.

Next steps

On 2 December 2021, the Group of Governmental Experts to the Convention on Conventional Weapons (CCW) will decide on whether to proceed with negotiations to establish a new international treaty to regulate and establish limitations on autonomous weapons systems. So far nearly 70 states have called for a new, legally binding framework to address autonomy in weapons systems. However, following almost 8 years of talks at the UN, progress has been stalled largely due to the stance of a small number of states, including the United Kingdom, who regard the creation of a new international law as premature.

Growing momentum in the UK on this issue is not confined to parliament. Ahead of the UN talks, the UK campaign will release a paper voicing concerns identified by members of the tech community as well as a compendium of research by our junior fellows showing that the ethical framework around which research in UK universities is proceeding is not fit for purpose (early findings from the research have been published here and related research from the Cambridge Tech and Society group can be found here).

The UK Campaign to Stop Killer Robots, a coalition of NGOs, academics and tech experts, believes that UK plans for significant investments in military AI and autonomy, as announced in the UK Integrated Review of Security, Defence, Development and Foreign Policy, must be accompanied by a commitment to work internationally to upgrade arms control treaties to ensure that human rights, ethical and moral standards are retained.

While we welcome the assertion made in the Integrated Review that the “UK remains at the forefront of the rapidly-evolving debate on responsible development and use of AI and Autonomy, working with liberal-democratic partners to shape international legal, ethical & regulatory norms & standards”, we are concerned that urgency is missing from the UK’s response to this issue. We hope the growing chorus of voices calling for action will help convince the UK to support the UN Secretary-General’s appeal for states to develop a new, binding treaty to address the urgent threats posed by lethal autonomous weapons systems at the critical UN meeting in December 2021.

Lord C-J: We urgently need to tighten data protection laws to protect children from facial recognition in schools

From the House Live November 2021

A little over two weeks ago, the news broke that facial recognition software in cashless payment systems – piloted in a Gateshead School last summer – had been adopted in nine Ayrshire schools. It is now clear that this software is becoming widely adopted, both sides of the border, with 27 schools already using it in England and another 35 or so in the pipeline.

Its use has been “temporarily paused” by North Ayrshire council after objections from privacy campaigners and an intervention from the Information Commissioner’s Office, but this is an extraordinary use of children’s biometric data for this purpose when there are so many other alternatives to cashless payment available.

"We seem to be conditioning society to accept biometric technologies in areas that have nothing to do with national security or crime prevention"

It is clear from the surveys and evidence to the Ada Lovelace Institute, which has an ongoing Ryder review of the governance of biometric data, that the public already has strong concerns about the use of this technology. But we seem to be conditioning society to accept biometric and surveillance technologies in areas that have nothing to do with national security or crime prevention and detection.

The Department for Education (DfE) issued guidance in 2018 on the Protection of Freedoms Act 2012, which makes provision for the protection of biometric information of children in schools and the rights of parents and children as regards participation. But it seems that the DfE has no data on the use of biometrics in schools. It seems there are no compliance mechanisms to ensure schools observe the Act.

There is also the broader question, under General Data Protection Regulation (GDPR), as to whether biometrics can be used at all, given the age groups involved. The digital rights group Defend Digital Me contends, “no consent can be freely given when the power imbalance with the authority is such that it makes it hard to refuse”. It seems that children as young as 14 may have been asked for their consent.

The Scottish First Minister, despite saying that “facial recognition technologies in schools don’t appear to me to be proportionate or necessary”, went on to say that schools should carry out a privacy impact assessment and consult pupils and parents.

But this does not go far enough. We should be firmly drawing a line against this. It is totally disproportionate and unnecessary. In some jurisdictions (New York, France and Sweden) its use in schools has already been banned or severely limited.

This is, however, a particularly worrying example of the way that public authorities are combining the use of biometric data with AI systems without proper regard for ethical principles.

Despite the R (Bridges) v Chief Constable of South Wales Police & Information Commissioner case (2020), the Home Office and the police have driven ahead with the adoption of live facial recognition technology. But as the Ada Lovelace Institute and Big Brother Watch have urged – and the Commons Science and Technology Committee in 2019 recommended – there should be a voluntary pause on the sale and use of live facial recognition technology to allow public engagement and consultation to take place.

In their response to the Select Committee’s call, the government insisted that there is already a comprehensive legal framework in place – which they are taking measures to improve. Given the increasing danger of damage to public trust, the government should rethink its complacent response.

The capture of biometric data and use of LFR in schools is a highly sensitive area. We should not be using children as guinea pigs.

I hope the Information Commissioner's Office’s (ICO’s) report will be completed as a matter of urgency. But we urgently need to tighten our data protection laws to ensure that children under the age of 18 are much more comprehensively protected from the use of facial recognition technology than they are at present.

Lord C-J Comments on National AI Strategy

The UK Government has recently unveiled its National AI Strategy and promised a White Paper on AI Governance and regulation in the near future.

Melissa Heikkilä at Politico's AI: Decoded newsletter covered my comments:

https://www.politico.eu/article/uk-charts-post-brexit-path-with-ai-strategy/

UK charts post-Brexit path with AI strategy

Digital minister says the government’s plan is to keep regulation ‘at a minimum.’

The U.K. wants the world to know that unlike in Brussels, it will not bother AI innovators with regulatory drama.

While the EU frets about risky AI and product safety, the U.K.'s AI strategy, unveiled on Wednesday, promised to create a “pro-innovation” environment that will make the country an attractive place to develop and deploy artificial intelligence technologies, all the while keeping regulation “to a minimum,” according to digital minister Chris Philp.

The U.K.'s strategy, which contrasts markedly with the EU's own proposed AI rules, indicates that it's embracing the freedom that comes from not being tied to Brussels, and that it's keen to ensure that freedom delivers it an economic boost.

In the strategy, the country sets out how the U.K. will invest in AI applications and help other industries integrate artificial intelligence into their operations. Absent from the strategy are its plans on how to regulate the tech, which has already demonstrated potential harms, like the exams scandal last year in which an algorithm downgraded students' predicted grades.

The government will present its plans to regulate AI early next year.

Speaking at an event in London, Philp said the U.K. government wants to take a “pro-innovation” approach to regulation, with a light-touch approach from the government.

“We intend to keep any form of regulatory intervention to a minimum," the minister said. "We will seek to use existing structures rather than setting up new ones, and we will approach the issue with a permissive mindset, aiming to make innovation easy and straightforward, while avoiding any public harm while there is clear evidence that exists.”

Despite the strategy's ambition, the lack of specific policy proposals in it means industry will be watching out for what exactly a "pro-innovation" policy will look like, according to tech lobby TechUK’s Katherine Holden.

“The U.K. government is trying to strike some kind of healthy balance within the middle, recognizing that there's the need for appropriate governance and regulatory structures to be put in place… but make sure it's not at the detriment of innovation,” said Holden.

Break out, or fit in?

The U.K.'s potential revisions to its data protection rules are one sign of what its strategy entails. The country is considering scrapping a rule that prohibits automated decision-making without human oversight, arguing that it stifles innovation.

Like AI, the government considers its data policy as an instrument to boost growth, even as a crucial data flows agreement with the EU relies on the U.K. keeping its own data laws equivalent to the EU's.

A divergent approach to AI regulation could make it harder for U.K.-based AI developers to operate in the EU, which will likely finalize its own AI laws next year.

“If this is tending in a direction which is diverging substantially from EU proposals on AI, and indeed the GDPR [the EU's data protection rules] itself, which is so closely linked to AI, then we would have a problem,” said Timothy Clement-Jones, a former chair of the House of Lords’ artificial intelligence liaison committee.

Clement-Jones was also skeptical of the U.K.'s stated ambition to become a global AI standard-setter. “I don't think the U.K. has got the clout to determine the global standard,” Clement-Jones said. “We have to make sure that we fit in with the standards” he said, adding that the U.K. needs to continue to work its AI diplomacy in international fora, such as at the OECD and the Council of Europe.

In its AI Act, the European Commission also sets out a plan to boost AI innovation, but it will strictly regulate applications that could impinge on fundamental rights and product safety, and includes bans for some “unacceptable” uses of artificial intelligence such as government-conducted social scoring. The EU institutions will be far along in their legislative work on AI by the time the U.K. comes out with its own proposal.

“Depending on the timeline for AI regulation pursued by Brussels, the U.K. runs the risk of having to harmonize with EU AI rules if it doesn't articulate its own approach soon,” said Carly Kind, the director of the Ada Lovelace Institute, which researches AI and data policy.

“If the UK wants to live up to its ambition of becoming an AI superpower, the development of a clear approach to AI regulation needs to be a priority,” Kind said.

SCL/Queen Mary Global Policy Institute Discussion: AI Ethics and Regulations

I recently took part in a panel with David Satola, Lead ICT Counsel of the World Bank, Patricia Shaw, SCL Chair and CEO, Beyond Reach Consulting, Jacob Turner, Barrister and author of ‘Robot Rules: Regulating Artificial Intelligence’ and Dr Julia Ive, Lecturer in Natural Language Processing at Queen Mary. It was chaired by Fernando Barrio, SCL Trustee and Senior Lecturer in Business Law, School of Business and Management at Queen Mary and Academic Lead for Resilience and Sustainability, Queen Mary Global Policy Institute.

The regulatory and policy environment of Artificial Intelligence is one that is both in a state of flux and attracting increasing attention from policy makers and business leaders at a global scale. AI is having an impact on most aspects of government activities, business operations and people’s lives, and the proposals for letting the technology advance unregulated are under question, with increasing realization of the potential pitfalls of such unhindered development.

Our chair asked the following questions

1- There has been much talk and debate about the need to set up ethical rules for the use of AI in different sectors of society and the economy; what are your views in relation to the establishment of such rules, vis a vis the possibility of enacting a regulatory framework for AI?

2- Assuming that there is a consensus about the need to regulate AI development and deployment: what should be the basis of such a regulatory framework? (e.g., risk-based, principle-based, etc.)

3- In the same way as almost every aspect of ICT, AI poses challenges to the domestic regulation of its activities; what is the role you envisage for different levels of potential regulatory bodies, local, national, regional and/or global?

Here is the podcast of the session.

https://www.scl.org/podcasts/12367-ai-ethics-and-regulations

Introduce a Health Data Trust to protect private medical data say Lib Dems

At our Autumn Conference, the Liberal Democrats have backed ambitious plans to safeguard private health data.

We called for the establishment of a five-point ‘Health Data Charter’, which will set out key tests for whether data sharing is in the interest of the public and the NHS. We also proposed a ‘Sovereign Health Data Trust’, which would bring together experts, clinicians and patient representatives to oversee the implementation and observance of the new charter.

Here is what I said in introducing the proposal:

I am sure that as Liberal Democrats we all recognize the benefits of using health data which arises in the course of treating patients in the NHS: for research that will lead to new and improved treatments for disease, and for the purposes of public health and health services planning. It has been particularly beneficial in helping to improve the treatment of COVID. The collection of health data can help save lives.

However, we also strongly support the right of the individual to choose whether to share their data or not, and to understand how and why their health data is being used.

But increasingly the Government and, I am sad to say, agencies such as NHS Digital and NHSX, seem to think that they can share patient data with private companies with barely a nod to patient consent and the proper principles of data protection.

We can go back to December 2019 and the discovery by Privacy International that the Department of Health and Social Care had agreed to give free access to NHS England health data to Amazon, which allowed them to develop, advertise, and sell “new products, applications, cloud-based services and/or distributed software”.

In other words, for use with its Alexa device.

Then, take the situation that the health team found earlier this year with GP patient data. We had what has been described as the biggest data grab in the history of the health service. In May, NHS Digital, with minimal consultation, explanation or publicity and without publication of any data protection impact assessment, published its plans to share patients’ primary health care data collected by GP practices. Patients were given just 6 weeks to opt out.

As a result of campaigning by our health team and many others, including a group of Tower Hamlets GPs who refused to hand over patient data, Ministers first announced that implementation would be delayed until 1 September and then informed GPs by letter in July that the whole scheme would be put on hold, including the data collection.

As a result of this bungled approach more than a million people have now opted out of NHS data-sharing.

The government have had to revise their approach by devising a simpler opt out system and committing to the publication of a data impact assessment before data collection starts again. They have also stated that access to GP data will only be via a Trusted Research Environment (TRE) and have had to commit to a properly thought through engagement and communications strategy.

But this is no way to gain public trust in how our NHS health data is used.

The Government must gain society's trust through honesty, transparency and rigorous safeguards. The data held by the NHS must be considered as a unique source of value held for the national benefit. If we are to retain public trust in the use of health data, we need a new framework of a Health Data Charter as clearly set out in the motion. We need a guarantee that our health data will be used in an ethical manner, assigned its true value, and used for the benefit of UK healthcare.

A recent report by EY estimated this data could be worth around £10 billion a year in the benefit delivered. Any proceeds from data collaborations that the Government agrees to, integral to any ‘replacement’ or ‘new’ trade deals, should be ring-fenced for reinvestment in the health and care system with a Sovereign Health Data Trust.

Retaining control over our publicly generated data, particularly our health data, for planning, research and innovation is vital if the UK is to maintain its position as a leading life sciences economy and innovator. As we set out in the motion, all health data must be held anonymously and accessed through a Trusted Research Environment.

We need to restore credibility and trust through:

- Guaranteeing greater transparency in how patient data is handled, where it is stored and with whom, and what it is being used for.

- Allowing the public to have a say in how NHS data is used.

- Appropriate and sufficient regulation that strikes the right balance between credibility, trust, ethics and innovation.

- Ensuring service providers that handle patient data operate within a tight ethical framework.

At the moment that trust is being lost.

Conference, our Health Data Charter and Sovereign Health Data Trust are the way to restore it.

This is the motion:

Conference believes that:

I. Data collection and sharing are important and have been instrumental in advancing medical capabilities and improving population health.

II. Individuals have the right to understand how and why their health data is being used.

III. Understanding and transparency is key to public trust and confidence in data sharing initiatives.

IV. The wealth of data held by the NHS should be used for the benefit of the health service and improving people’s health only.

V. Health data should never be shared for marketing or insurance purposes.

Conference calls for the creation of a Health Data Charter that will:

i) Set out the fundamental principles and responsibilities for assessing whether a data sharing partnership is in the interest of the public and the NHS.

ii) Aim to ensure trust in the Government’s handling of health data, by laying out stringent principles that will help protect people’s privacy and their data from exploitation.

iii) Lay out ways to retain and protect the value of the nation’s health data.

Conference further calls for the creation of a Sovereign Health Data Trust that will:

a) Comprise a diverse, independent and balanced board of experts, clinicians and patient representatives which will be responsible for overseeing the implementation and observance of the Charter.

b) Have continuous oversight of all health data; with not only the power to grant access, but also the power to recall or restrict an organisation’s access if it has reason to believe that the data is not being used for public or patient benefit.

c) Be designed in such a way as to render it interoperable with the European Health Data Space in technical and in semantic terms, including through the promotion of Findable, Accessible, Interoperable and Reusable (FAIR) data principles within the NHS.

Conference endorses the Liberal Democrats’ proposed Health Data Charter as follows:

- Access to health data must be for public and patient benefit – this benefit will be defined by the Sovereign Health Data Trust with input from external experts and the public; the Trust will also determine whether an organisation can access the data for such purposes and can rescind access at any time.

- No data shall be shared with an organisation without complete transparency – all health data contracts entered into by a public body must be published publicly and in a timely manner, as should detailed minutes for all meetings, including meetings of the Sovereign Health Data Trust, and details of the organisation requesting use of the data, such as its sources of funding, ownership and intentions.

- All health data collection and sharing initiatives must be preceded by public consultation, involvement and awareness – public trust is key to any sharing of health data; this trust must be built through awareness and consultation with the public to ensure there is a solid understanding of the benefits of sharing health data with external organisations and bodies.

- The value of all health data must be retained by the NHS – people’s health data belongs to them and any value derived from it should be for everyone’s benefit; when data is used to develop new medicines or treatments, by research organisations or commercial enterprises, a share of the income generated should therefore be invested back into the healthcare system and the NHS.

- All health data must be held anonymously and accessed through a Trusted Research Environment – this Environment will be overseen by the Trust and will ensure that no data is handed over to an organisation indefinitely and therefore outside the governance of the Trust; it will also mean that personal data can be retrieved should a person wish to opt out at any time, and access granted to an external organisation withdrawn, should it be found they are not using the data as agreed.

- Consent processes must include plain language terms, and formats accessible to disabled people and people with low literacy.

Opening the new AI and Innovation Centre at Buckingham University

Great to open the new centre with the assistance of Spot the Boston Dynamics dog!

Here is what I said:

I am thrilled to assist with the opening of this trailblazing Artificial Intelligence and Innovation Centre. It’s fantastic to see this collaboration between the Buckinghamshire Local Enterprise Partnership through its Growth Fund and the University which will result in pioneering AI, cyber and robotics, help to produce the next generation of leaders in computing and the development of ground-breaking technology in Buckinghamshire, working especially with the Silverstone Park and Westcott Enterprise Zone.

Many of us, including Silicon Valley billionaires it seems, read science fiction for pleasure, inspiration, and as a way of coming to terms with potential future technology. But little did I think, when I was young and read Isaac Asimov’s Foundation Trilogy or watched R.U.R., the 1920 science-fiction play by Karel Čapek that invented the word “robot”, that I would many years later be helping open an AI and Innovation Centre at a major university!

It is significant that the Centre is not just located within the School of Computing (home to Spot the Boston Dynamics Dog and Birdly, the VR bird experience) but it is part of the University’s Faculty of Computing, Law and Psychology and I was particularly delighted to hear of the existing cross disciplinary faculty work on Games and VR, Fintech law, and human robotics interaction, cyber bullying and cyber psychology.

As a lawyer, I think my own involvement demonstrates the cross disciplinary nature nowadays of so much to do with AI and machine learning. The Lords Select Committee on AI which I chaired a few years ago emphasized this in calling for an ethical framework for the development and application of AI. Working that through to regulation, nationally and internationally, will involve cross disciplinary skills and knowledge. AI developers need to have a broad understanding of the societal and ethical context in which they operate as well as technical skills.

Asimov’s three laws of robotics were a deliberate response to the kind of situation depicted in R.U.R., so the need for ethics and regulation was conceived from the dawn of AI and robotics. You may also remember, of course, that in the Foundation books the central figure, Hari Seldon (no relation), creates the Foundations, two groups of sociologists, scientists and engineers at opposite ends of the galaxy, to preserve the spirit of science and civilization and thus become the cornerstones of the new galactic empire. Early cross disciplinarity!

On an international if not galactic stage however, the University can take great pride in hosting the Institute for Ethical AI in Education which I had the privilege of chairing and which developed a highly influential Ethical Framework for AI in Education which will I hope stand the test of time.

This brings me on to another aspect of cross disciplinarity: creativity. Creative skills in how to use AI and other new technology will be vital. Take for example the play “AI” recently featured in Rory Cellan-Jones’ BBC Technology blog. This is a new play developed alongside AI using the deep-learning system GPT-3 to generate human-like dialogue and script, and, as they describe it, “As artists and intelligent systems collide, AI asks us to consider the algorithms at work in the world around us, and what technology can teach us about ourselves”. Reassuringly the director and Rory both concluded that human input for a fully satisfying dramatic product was still essential!

Another aspect which cross disciplinary work brings is to ensure wider perspectives on the development of AI and other new technology. Diversity in those who oversee the development and application of AI is crucial, as we highlighted in our original AI Select Committee Report. Failing this, as we said, we will fail to spot bias in the data sets and repeat the prejudices of the past.

Finally, I wanted to highlight the leadership that the University and Buckinghamshire LEP are showing in this field. Our AI Select Committee were confident that the UK could and would punch well above its weight in the development of AI and play an important international leadership role in both technological and ethical aspects. I congratulate you both for your vision in setting up the Centre which looks set fair to make a huge contribution to the UK’s leadership role.

Lord C-J: More Action on Algorithmic Decision Making Needed from Government to Uphold Nolan Standards

The Lords recently held a fascinating debate on Standards in Public Life. Here is what I said about the standards we need to apply to algorithmic decision making in Government.

https://hansard.parliament.uk/Lords/2021-09-09/debates/4529BCC1-117D-4E8A-9DD8-A07CA472CD65/StandardsInPublicLife#contribution-AFAE87BA-AE61-4C0B-A79D-64627483A04F

My Lords, it is a huge pleasure to follow the noble Lord, Lord Puttnam. I commend his Digital Technology and the Resurrection of Trust report to all noble Lords who have not had the opportunity to read it. I thank the noble Lord, Lord Blunkett, for initiating this debate.

Like the noble Lord, Lord Puttnam, I will refer to a Select Committee report, going slightly off track in terms of today’s debate: last February’s Artificial Intelligence and Public Standards report by the Committee on Standards in Public Life, under the chairmanship of the noble Lord, Lord Evans of Weardale. This made a number of recommendations to strengthen the UK’s “ethical framework” around the deployment of AI in the public sector. Its clear message to the Government was that

“the UK’s regulatory and governance framework for AI in the public sector remains a work in progress and deficiencies are notable … on the issues of transparency and data bias in particular, there is an urgent need for … guidance and … regulation … Upholding public standards will also require action from public bodies using AI to deliver frontline services.”

It said that these were needed to

“implement clear, risk-based governance for their use of AI.”

It recommended that a mandatory public AI “impact assessment” be established

“to evaluate the potential effects of AI on public standards”

right at the project-design stage.

The Government’s response, over a year later—in May this year—demonstrated some progress. They agreed that

“the number and variety of principles on AI may lead to confusion when AI solutions are implemented in the public sector”.

They said that they had published an “online resource”—the “data ethics and AI guidance landscape”—with a list of “data ethics-related resources” for use by public servants. They said that they had signed up to the OECD principles on AI and were committed to implementing these through their involvement as a

“founding member of the Global Partnership on AI”.

There is now an AI procurement guide for public bodies. The Government stated that

“the Equality and Human Rights Commission … will be developing guidance for public authorities, on how to ensure any artificial intelligence work complies with the public sector equality duty”.

In the wake of controversy over the use of algorithms in education, housing and immigration, we have now seen the publication of the Government’s new “Ethics, Transparency and Accountability Framework for Automated Decision-Making” for use in the public sector. In the meantime, Big Brother Watch’s Poverty Panopticon report has shown the widespread issues in algorithmic decision-making increasingly arising at local-government level. As decisions by, or with the aid of, algorithms become increasingly prevalent in central and local government, the issues raised by the CSPL report and the Government’s response are rapidly becoming a mainstream aspect of adherence to the Nolan principles.

Recently, the Ada Lovelace Institute, the AI Now Institute and Open Government Partnership have published their comprehensive report, Algorithmic Accountability for the Public Sector: Learning from the First Wave of Policy Implementation, which gives a yardstick by which to measure the Government’s progress. The position regarding the deployment of specific AI systems by government is still extremely unsatisfactory. The key areas where the Government are falling down are not the adoption and promulgation of principles and guidelines but the lack of risk-based impact assessment to ensure that appropriate safeguards and accountability mechanisms are designed so that the need for prohibitions and moratoria for the use of particular types of high-risk algorithmic systems can be recognised and assessed before implementation. I note the lack of compliance mechanisms, such as regular technical, regulatory audit, regulatory inspection and independent oversight mechanisms via the CDDO and/or the Cabinet Office, to ensure that the principles are adhered to. I also note the lack of transparency mechanisms, such as a public register of algorithms in operation, and the lack of systems for individual redress in the case of a biased or erroneous decision.

I recognise that the Government are on a journey here, but it is vital that the Nolan principles are upheld in the use of AI and algorithms by the public sector to make decisions. Where have the Government got to so far, and what is the current destination of their policy in this respect?

Lord Clement-Jones calls for immediate moratorium on live facial recognition


Posted by Georgia Meyer

The rise of facial recognition technology has been described as “Orwellian” by Met Police Chief Cressida Dick.

Source: https://pixabay.com/illustrations/face-detection-scan-scanning-4791810/

Artificial intelligence technologies that are candidates for potential regulation were outlined in a speech to the Institute of Advanced Legal Studies’ annual conference.

A member of the House of Lords has called for an immediate halt to police use of live facial-recognition technology, during a speech to legal academics.

Liberal Democrat peer Lord Clement-Jones outlined his opposition to a range of AI technologies during his speech at the Institute of Advanced Legal Studies’ annual conference.

The peer, who is the co-chair of the parliamentary group on Artificial Intelligence, has previously proposed a private member’s bill in Parliament which seeks to ban the use of facial recognition in public places. The bill, which was tabled in February, is currently waiting for its second reading.

During his speech, Lord Clement-Jones outlined the wide variety of uses of AI in public life, many of which are positive, but highlighted certain areas that he believes should be “early candidates for regulation”.

These include the use of algorithmic decision-making in policing, the criminal justice system, recruitment processes, financial credit scoring and insurance premium setting. The peer sees the technologies as lacking transparency and accountability.

Lord Clement-Jones told the conference that current EU legislation is overly data-centric and fails to take account of the nuanced ways that algorithmic decision-making is being applied to many areas of our lives. The development of a ‘risk-mechanism’ in the law, to assess ethical dilemmas on a case by case basis, is critical, he said.

His remarks come a week after the UK Minister for Data, John Whittingdale, gave an upbeat speech at the Open Data Institute about “unlocking the power of data and artificial intelligence to catalyse economic growth” in a post-Brexit UK.